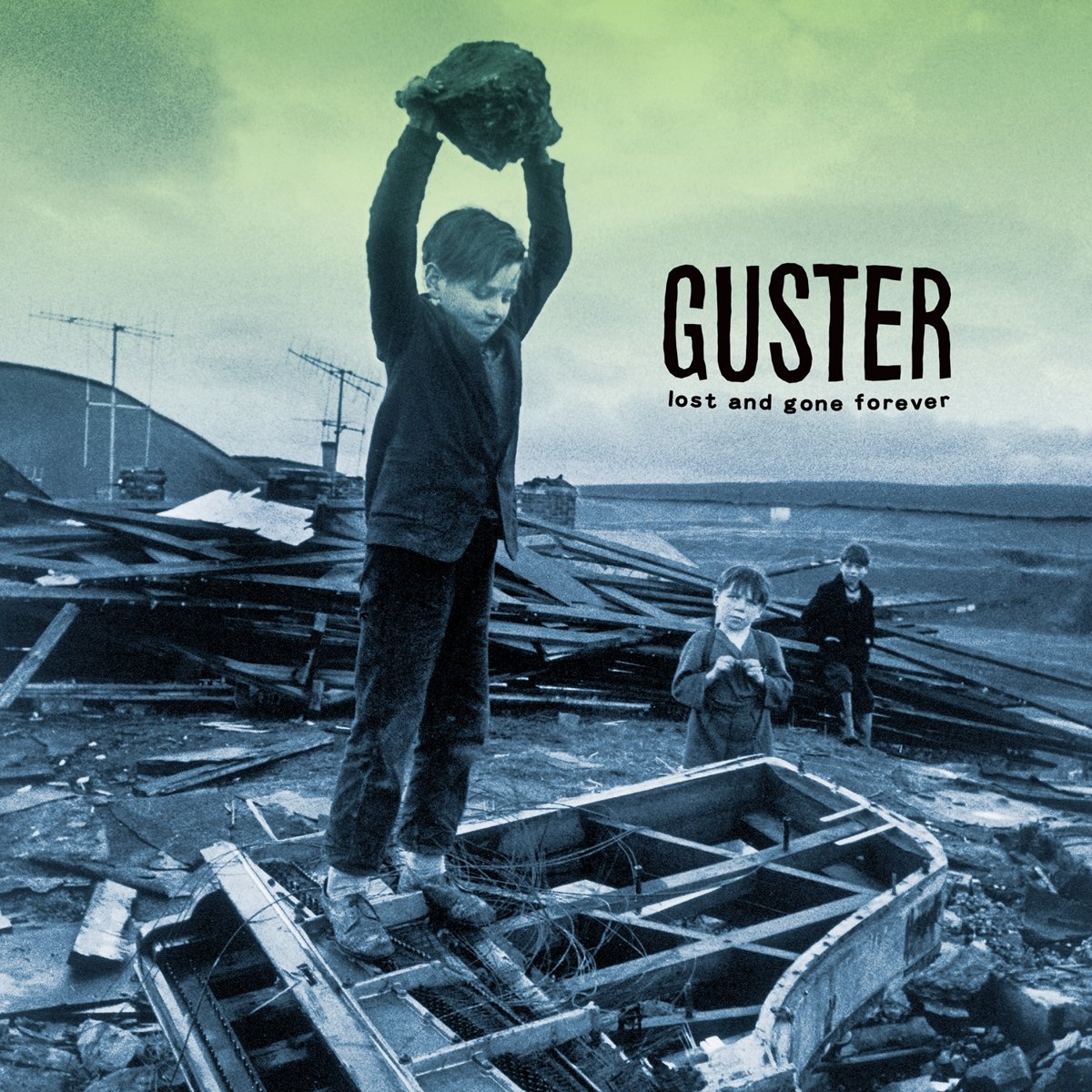



We propose two Newton-type methods for solving (possibly) nonconvex unconstrained multiobjective optimization problems. The first is directly inspired by the Newton method designed to solve convex problems, whereas the second combines second-order information of the objective functions with ingredients of the steepest descent method. A key point of both approaches is to impose safeguard strategies on the search directions. These strategies correspond to conditions that, at each iteration, prevent the search direction from being too close to orthogonal to the multiobjective steepest descent direction and require the lengths of the two directions to be proportional. To fulfill these safeguard conditions on the search directions of the Newton-type methods, we adopt the technique in which the Hessians are modified, if necessary, by adding multiples of the identity. For our first Newton-type method, we also show that, under convexity assumptions, the local superlinear rate of convergence (or quadratic, when the Hessians of the objectives are Lipschitz continuous) to a local efficient point of the given problem is recovered. The global convergence of these methods is established by first proving the global convergence of a general algorithm and then showing that the new methods are instances of this general algorithm. Numerical experiments illustrating the practical advantages of the proposed Newton-type schemes are presented.
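The two ingredients named above, Hessian modification by multiples of the identity and safeguard tests on the search direction, can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration rather than the authors' algorithm: the function names, the shift rule (a standard textbook strategy that increases the shift until a Cholesky factorization succeeds), the parameters `beta`, `growth`, `angle_tol`, and `length_factor`, and the particular form of the two inequalities. The text above does not give the exact conditions.

```python
import math


def is_positive_definite(a):
    """Attempt a Cholesky factorization of the symmetric matrix `a` (list of lists)."""
    n = len(a)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = a[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                if s <= 0.0:  # non-positive pivot: not positive definite
                    return False
                low[i][j] = math.sqrt(s)
            else:
                low[i][j] = s / low[j][j]
    return True


def shift_hessian(h, beta=1e-3, growth=2.0, max_tries=60):
    """Return (h + tau * I, tau) with the smallest tried tau >= 0 giving a PD matrix.

    Hypothetical rule: start from a shift that makes the diagonal positive,
    then multiply the shift by `growth` until the factorization succeeds.
    """
    n = len(h)
    min_diag = min(h[i][i] for i in range(n))
    tau = 0.0 if min_diag > 0.0 else beta - min_diag
    for _ in range(max_tries):
        shifted = [[h[i][j] + (tau if i == j else 0.0) for j in range(n)]
                   for i in range(n)]
        if is_positive_definite(shifted):
            return shifted, tau
        tau = max(growth * tau, beta)
    raise RuntimeError("no positive definite shift found")


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def satisfies_safeguards(d, v_sd, angle_tol=1e-2, length_factor=10.0):
    """Illustrative safeguards for a direction `d` against the steepest descent direction `v_sd`.

    Angle test: d must not be close to orthogonal to v_sd.
    Length test: the length of d must stay proportional to that of v_sd.
    """
    nd, nv = math.sqrt(dot(d, d)), math.sqrt(dot(v_sd, v_sd))
    if nd == 0.0 or nv == 0.0:
        return False
    angle_ok = dot(d, v_sd) >= angle_tol * nd * nv
    length_ok = nd <= length_factor * nv
    return angle_ok and length_ok
```

In a scheme of this kind, a direction that fails `satisfies_safeguards` could be recomputed with a larger shift, since a large multiple of the identity pulls a Newton-type direction toward the steepest descent direction. Whether the proposed methods proceed exactly this way is not stated in the text above.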